If all microstates are equally probable, why does entropy tend to increase? Why isn't entropy constant for a closed system? How can the number of microstates increase if entropy increases? Why is the Boltzmann entropy equation used for configurational entropy?

Boltzmann's Entropy and Time's Arrow: given that microscopic physical laws are reversible, why do all macroscopic events have a preferred time direction? Boltzmann's thoughts on this question have withstood the test of time. In this chapter we introduce the statistical definition of entropy as formulated by Boltzmann.

For anything but the most dilute of real gases, however, the Boltzmann entropy leads to increasingly wrong predictions of entropies and physical behaviours, because it ignores the interactions and correlations between different molecules. Instead one must follow Gibbs, and consider the ensemble of states of the system as a whole, rather than single-particle states.
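The first question above can be made concrete with a toy model. The sketch below is an illustration only, not taken from any of the quoted sources: it assumes N distinguishable particles that each sit in the left or right half of a box, so that every one of the 2^N microstates is equally probable. The function name `boltzmann_entropy_over_k` and the choice N = 100 are hypothetical.

```python
from math import comb, log

def boltzmann_entropy_over_k(n_particles: int, n_left: int) -> float:
    """Return S/k = ln W for the macrostate with n_left particles on the left.

    W is the number of microstates (ways to choose which particles are on
    the left), assuming distinguishable particles and two equal half-boxes.
    """
    multiplicity = comb(n_particles, n_left)
    return log(multiplicity)

N = 100  # assumed toy system size

# Entropy of every macrostate, indexed by the number of particles on the left.
entropies = [boltzmann_entropy_over_k(N, n) for n in range(N + 1)]

# The macrostate with the most microstates is the even split.
most_probable = max(range(N + 1), key=lambda n: entropies[n])

# Fraction of all 2**N equally probable microstates that lie within
# 10 particles of the even split.
fraction_near_even = sum(comb(N, n) for n in range(40, 61)) / 2**N

print(most_probable, fraction_near_even)
```

Every microstate is equally likely, but almost all of them belong to macrostates near the even split. A system that starts with all particles on one side (S/k = 0) and wanders among microstates is therefore overwhelmingly likely to be found later in a higher-entropy macrostate.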

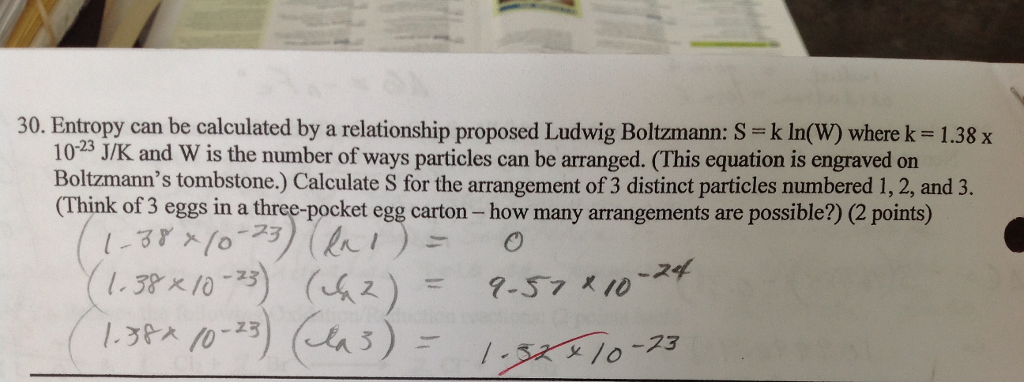

What is time? Time is arguably among the most primitive concepts we have: there can be no action or movement, no memory or thought, except in time. Of course this does not mean that we understand, whatever is meant by that loaded word 'understand', what time is.

Boltzmann defined entropy by the formula $S = k_B \ln \Omega$, where $\Omega$ is the volume of phase space occupied by a thermodynamic system in a given state. He postulated that $\Omega$ is proportional to the probability of the state, and deduced that a system is in its equilibrium state when entropy is a maximum. According to the Boltzmann equation, entropy is a measure of the number of microstates available to a system. The number of available microstates increases when matter becomes more dispersed, such as when a liquid changes into a gas or when a gas is expanded at constant temperature.

When the particles are treated as statistically independent, the probability distribution of the system as a whole factorises into the product of N separate identical terms, one term for each particle, and the Gibbs entropy simplifies to the Boltzmann entropy $S_B = -N k_B \sum_i p_i \ln p_i$, where $p_i$ is the probability of single-particle state $i$ and the summation is taken over each possible state in the 6-dimensional phase space of a single particle (rather than the 6N-dimensional phase space of the system as a whole). This reflects the original statistical entropy function introduced by Ludwig Boltzmann in 1872. For the special case of an ideal gas it exactly corresponds to the proper thermodynamic entropy.
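A small numerical check may help show why the Gibbs entropy reduces to N times a single-particle entropy only when the particles are independent. This is a minimal sketch under stated assumptions: the three-state distribution `single`, the particle count `N = 4`, and the correlated two-particle table are made-up illustrative numbers, and entropies are reported in units of the Boltzmann constant.

```python
import itertools
import math

def gibbs_entropy_over_k(probabilities):
    """Return -sum(p ln p), the Gibbs entropy in units of Boltzmann's constant."""
    return -sum(p * math.log(p) for p in probabilities if p > 0)

# Assumed single-particle distribution over three states (illustrative values).
single = [0.5, 0.3, 0.2]
N = 4

# Independent particles: each joint probability is a product of N
# single-particle probabilities, one factor per particle.
joint = [math.prod(ps) for ps in itertools.product(single, repeat=N)]
s_joint = gibbs_entropy_over_k(joint)
s_boltzmann = N * gibbs_entropy_over_k(single)

# Correlated pair: both marginals are (0.5, 0.5), but the joint
# distribution is not the product of the marginals.
correlated_joint = [0.4, 0.1, 0.1, 0.4]
s_correlated = gibbs_entropy_over_k(correlated_joint)
s_from_marginals = 2 * gibbs_entropy_over_k([0.5, 0.5])

print(s_joint, s_boltzmann)
print(s_correlated, s_from_marginals)
```

For the independent case the two numbers agree to rounding error. For the correlated pair, the entropy computed from single-particle marginals overestimates the entropy of the true joint distribution, which is the kind of error the single-particle formula makes when molecules interact.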

In consequence, as we see in Section 20.10, the probability of a population set is proportional to its thermodynamic probability, $W(N_i, g_i)$. Here $\rho_{MS,N,E}$ is the constant probability of any one microstate. The Boltzmann entropy is obtained if one assumes one can treat all the component particles of a thermodynamic system as statistically independent. The thermodynamic probability $W(N_i, g_i) = N! \prod_{i=1}^{\infty} \frac{g_i^{N_i}}{N_i!}$ is the number of microstates of the population set.
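The thermodynamic probability can be evaluated directly for a small population set. The sketch below assumes the usual reading of the symbols, which the text does not spell out: $N_i$ is the number of particles in energy level $i$, $g_i$ is the degeneracy of that level, $N$ is the sum of the $N_i$, and the particles are distinguishable. The function name and the example numbers are hypothetical.

```python
from math import factorial, prod

def thermodynamic_probability(populations, degeneracies):
    """Return W = N! * prod(g_i**N_i / N_i!) as an exact integer.

    populations:  N_i, number of particles in each energy level (assumed meaning)
    degeneracies: g_i, number of quantum states in each level (assumed meaning)
    """
    if len(populations) != len(degeneracies):
        raise ValueError("populations and degeneracies must have equal length")
    n_total = sum(populations)
    # N! / prod(N_i!) is a multinomial coefficient, so integer division is exact.
    arrangements = factorial(n_total) // prod(factorial(n) for n in populations)
    # Each particle in level i can occupy any of the g_i degenerate states.
    state_choices = prod(g**n for g, n in zip(degeneracies, populations))
    return arrangements * state_choices

# Three particles: two in a non-degenerate level, one in a doubly
# degenerate level, none in a triply degenerate level.
w_example = thermodynamic_probability([2, 1, 0], [1, 2, 3])
print(w_example)
```

Levels with zero population contribute a factor of 1, so only the occupied levels affect the result even though the product formally runs over all levels.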
